The Challenge

In radiology reviews for oncology patients, disease progression is currently evaluated by comparing Computerised Tomography (CT) scans. This process is time-consuming and complicated, as the radiologist must go through every quadrant of the radiological anatomy to evaluate whether cancer has increased or decreased and whether new lesions have appeared. The difficulty of this task can result in missed pathologies. Miss rates vary widely by lesion size and location; in the abdomen, for example, different radiologists may interpret the same scan differently in up to 37% of cases (Siewert, 2008).

It typically takes a radiologist 30 to 40 minutes to assess the scans from a single patient, and scans can only be reviewed in one dimension at a time. One-dimensional views can give a false impression of “Static” or “Minimal change” when the change, calculated in three dimensions, may in fact be significant.
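The following calculation is illustrative only and does not come from the project. It assumes an idealised spherical lesion, so that volume depends on the cube of the diameter. Under that assumption, a change that looks modest along one dimension corresponds to a much larger change in volume:

```python
import math


def sphere_volume(diameter_mm):
    """Volume of a sphere, V = pi * d**3 / 6 (an idealised lesion shape)."""
    return math.pi * diameter_mm ** 3 / 6


def percent_change(before, after):
    """Relative change from `before` to `after`, in percent."""
    return 100.0 * (after - before) / before


# Hypothetical lesion whose diameter grows from 20 mm to 24 mm
d0, d1 = 20.0, 24.0
linear_change = percent_change(d0, d1)
volume_change = percent_change(sphere_volume(d0), sphere_volume(d1))

print(f"diameter change: {linear_change:.1f}%")  # 20.0%
print(f"volume change:   {volume_change:.1f}%")  # 72.8%
```

Because 1.2³ ≈ 1.73, a 20% growth in diameter is roughly a 73% growth in volume. Real lesions are rarely spherical, so this shows only the scaling effect, not a clinical measurement.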

Roke has recently developed a proof of concept that has the potential to speed up the analysis of CT scans to free up radiologists’ time and help identify tissue growth. This work was funded by the NHS AI Skunkworks team and was delivered in partnership with George Eliot Hospital (GEH) through the Accelerated Capability Environment (ACE).

The Approach

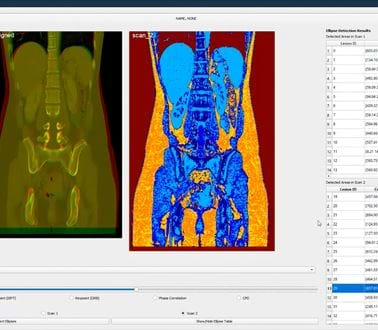

This 12-week research project focused on identifying features in CT scans that could be used to automatically align scan "slices", enabling early detection and diagnosis of lesions. The approach taken was to build a proof-of-concept Graphical User Interface (GUI) tool delivering the following capabilities:

- Fast, automatic overlay of sequential CT scans, enabling users to compare lesion growth easily in 2D and 3D

- Handling of changes in body shape between scans, caused for example by breathing or changes in body composition

- Differentiation between parts of the body (e.g. bone, organs and lesions)

- Automatic measurement of lesion size in both 2D and 3D

- Identification of new lesions not present in previous scans
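How the tool measured lesions is not described here. The sketch below shows one simple way that 2D and 3D size could be read off once a lesion is available as a binary voxel mask. It assumes the lesion has already been segmented and that the voxel spacing in millimetres is known. The function name and the chosen metrics (largest axial cross-section, volume, equivalent-sphere diameter) are hypothetical choices for illustration.

```python
import numpy as np


def lesion_measurements(mask, spacing_mm):
    """Simple 2D and 3D size measures for one segmented lesion (illustrative).

    mask: boolean array indexed (slice, row, column), True inside the lesion.
    spacing_mm: voxel size in mm along the (slice, row, column) axes.
    """
    mask = np.asarray(mask, dtype=bool)
    voxel_volume = float(np.prod(spacing_mm))         # mm^3 per voxel
    volume = float(mask.sum()) * voxel_volume         # 3D: total lesion volume

    pixel_area = spacing_mm[1] * spacing_mm[2]        # mm^2 per in-plane pixel
    slice_areas = mask.sum(axis=(1, 2)) * pixel_area  # 2D: lesion area on each slice
    max_area = float(slice_areas.max()) if mask.any() else 0.0

    # Diameter of a sphere with the same volume, as a single-number summary
    equivalent_diameter = (6.0 * volume / np.pi) ** (1.0 / 3.0)
    return {
        "volume_mm3": volume,
        "max_axial_area_mm2": max_area,
        "equivalent_diameter_mm": equivalent_diameter,
    }


# Usage on a synthetic box-shaped "lesion": 4 slices x 5 x 5 pixels = 100 voxels
mask = np.zeros((10, 20, 20), dtype=bool)
mask[3:7, 5:10, 5:10] = True
m = lesion_measurements(mask, spacing_mm=(2.0, 0.5, 0.5))
print(m)  # includes volume_mm3 = 50.0 and max_axial_area_mm2 = 6.25
```

Comparing these numbers between a baseline and a follow-up scan gives both a 2D and a 3D view of growth.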

A mixture of computer vision, machine learning and deep learning techniques was explored, several of which involved novel methodological steps. The project applied multiple techniques in combination to detect anomalies: machine learning using textons for tissue sectioning; classical computer vision using ellipsoid detection to find lesions; and deep learning, including Facebook's recently released self-supervised learning method “DINO”, for lesion detection.
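The write-up does not say how the texton step was implemented. As a minimal sketch of the general texton idea, the block below makes several assumptions of its own:

- A small, hypothetical filter bank (Gaussian smoothing, first derivatives and Laplacian of Gaussian at three scales) is applied to a 2D slice.
- The per-pixel responses are clustered with a plain k-means.
- Each pixel is labelled with its nearest cluster centre, or "texton".

The resulting label image is the kind of map that could then be coloured by tissue type. The filters, `k` and all function names are illustrative, not the project's.

```python
import numpy as np
from scipy import ndimage


def filter_bank_responses(image, sigmas=(1.0, 2.0, 4.0)):
    """Per-pixel responses to a small, illustrative multi-scale filter bank."""
    image = image.astype(float)
    responses = []
    for s in sigmas:
        responses.append(ndimage.gaussian_filter(image, s))                # smoothed intensity
        responses.append(ndimage.gaussian_filter(image, s, order=(0, 1)))  # horizontal derivative (axis 1)
        responses.append(ndimage.gaussian_filter(image, s, order=(1, 0)))  # vertical derivative (axis 0)
        responses.append(ndimage.gaussian_laplace(image, s))               # Laplacian of Gaussian
    feats = np.stack(responses, axis=-1).reshape(-1, len(responses))
    # Standardise each channel so no single filter dominates the distance computation
    return (feats - feats.mean(axis=0)) / (feats.std(axis=0) + 1e-8)


def kmeans(x, k, iters=20, seed=0):
    """Plain k-means with k-means++ seeding; returns (centres, labels)."""
    rng = np.random.default_rng(seed)
    centres = [x[rng.integers(len(x))]]
    for _ in range(1, k):
        # Pick each new seed with probability proportional to squared distance
        d2 = ((x[:, None, :] - np.array(centres)[None, :, :]) ** 2).sum(axis=-1).min(axis=1)
        p = d2 / d2.sum() if d2.sum() > 0 else None
        centres.append(x[rng.choice(len(x), p=p)])
    centres = np.array(centres)
    for _ in range(iters):
        labels = ((x[:, None, :] - centres[None, :, :]) ** 2).sum(axis=-1).argmin(axis=1)
        for j in range(k):
            members = x[labels == j]
            if len(members):  # leave an empty cluster's centre where it is
                centres[j] = members.mean(axis=0)
    # Final assignment against the updated centres
    labels = ((x[:, None, :] - centres[None, :, :]) ** 2).sum(axis=-1).argmin(axis=1)
    return centres, labels


def texton_map(image, k=4):
    """Label every pixel of a 2D slice with the index of its nearest texton."""
    feats = filter_bank_responses(image)
    textons, labels = kmeans(feats, k)
    return labels.reshape(image.shape), textons


# Usage on a synthetic slice: two flat regions separated by a vertical edge
img = np.zeros((64, 64))
img[:, 32:] = 100.0
labels, textons = texton_map(img, k=2)
```

The pairwise-distance step holds an N × k × F array in memory, where N is the number of pixels, k the number of textons and F the number of filter channels. That is fine for a sketch, but a full-resolution slice would call for a batched or library k-means.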

The Outcome

This project has provided an opportunity to showcase the range of capabilities available to data scientists to make a difference in healthcare using cutting-edge AI techniques. It has shown the potential value AI could bring to the day-to-day working practices of radiologists by delivering automated tools for sequential CT scan overlay, automated lesion detection, measurement and reporting, and 3D visualisation of sequential CT scans. These provide the following benefits:

- Alignment & Overlay - Techniques to automate the alignment, overlay and visualisation of sequential CT scans

- Tissue Sectioning - The classic ‘texton’ computer vision technique was used to produce colour maps of different tissue types

- Automated Lesion Detection & Measurement - Automated detection and location reporting of potential lesions using AI, and 3D measurement of lesion(s), as shown below

- 3D Visualisation - Overlaying, aligning and displaying sequential CT scans in two different colours
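The write-up does not describe how alignment was performed, and the project also had to cope with non-rigid changes such as breathing. The sketch below covers only the simplest case: two 2D slices that differ by a whole-pixel translation. It works in three steps:

- Estimate the offset with phase correlation. This is a standard technique chosen here for illustration, not necessarily the one Roke used.
- Shift the follow-up slice into place.
- Render the pair in two colours, with the baseline in red and the follow-up in green.

All function names are hypothetical.

```python
import numpy as np


def estimate_shift(fixed, moving):
    """Integer (row, col) shift that, applied with np.roll, moves `moving` onto `fixed`.

    Uses phase correlation, which only models a rigid translation.
    """
    cross = np.fft.fft2(fixed) * np.conj(np.fft.fft2(moving))
    cross /= np.abs(cross) + 1e-12          # keep phase information only
    corr = np.fft.ifft2(cross).real         # sharp peak at the relative offset
    peak = np.unravel_index(np.argmax(corr), corr.shape)
    # Indices past the midpoint wrap around to negative shifts
    return tuple(int(p - n) if p > n // 2 else int(p) for p, n in zip(peak, corr.shape))


def to_unit_range(a):
    """Rescale an image to [0, 1] for display."""
    a = a.astype(float)
    span = a.max() - a.min()
    return (a - a.min()) / span if span > 0 else np.zeros_like(a)


def align_and_overlay(baseline, follow_up):
    """Align a follow-up slice to the baseline and build a two-colour RGB overlay."""
    shift = estimate_shift(baseline, follow_up)
    aligned = np.roll(follow_up, shift, axis=(0, 1))  # note: np.roll wraps pixels at the edges
    rgb = np.zeros(baseline.shape + (3,))
    rgb[..., 0] = to_unit_range(baseline)  # baseline in red
    rgb[..., 1] = to_unit_range(aligned)   # follow-up in green; overlap shows as yellow
    return shift, aligned, rgb


# Usage: simulate a follow-up slice that is the baseline shifted by (5, -7) pixels
baseline = np.random.default_rng(0).random((64, 64))
follow_up = np.roll(baseline, (5, -7), axis=(0, 1))
shift, aligned, rgb = align_and_overlay(baseline, follow_up)
print(shift)  # (-5, 7): the shift that undoes the simulated offset
```

In the overlay, regions where the two scans agree appear yellow, while tissue present in only one scan stays red or green. Handling breathing and changes in body composition would need a deformable registration step on top of this.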

Further work is required to develop these promising AI techniques beyond this 12-week proof-of-concept project, but the technology clearly has the potential to provide a much-needed ‘support tool’ for radiologists across the NHS.

Sid Singh, Chief Clinical Informatics Officer, George Eliot Hospital, said:

“The diligence and commitment from Roke was consistent and of a very high level. In particular, the efforts to engage with clinicians and form a seamless clinician-developer team working during various iterations of the AI algorithm and its outputs were noteworthy, which then became the key to the final outcomes.”

Giuseppe Sollazzo, Head of AI Skunkworks, Deputy Director NHS AI Lab, commented:

"This project has taken George Eliot Hospital even further along their AI journey. That's what's so valuable about co-producing these experiments with AI technology - the shared learning during each project doesn't end after the 12 weeks, we release open source code that continues the opportunity for experimentation beyond our NHS AI Lab Skunkworks project objectives."

Neil Gladstone, Roke’s Futures Business Unit Director, said:

“Roke relished the opportunity to use our cross industry expertise in machine vision and AI/ML in tackling this complex healthcare problem. Working with GEH and NHSX has been a great experience and we are excited to see how we can help improve patient outcomes in the future.”
